The Data Sparsity Trap: Why Current Ad Platform "Fixes" Are Failing

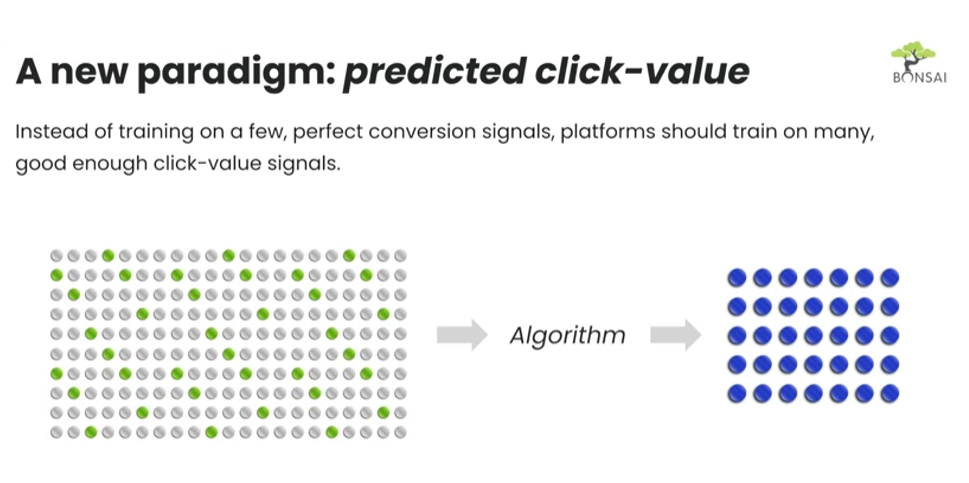


If your website has a typical conversion rate of 1%, then for every conversion that influences your ad buying, there are 99 clicks that could carry valuable information but are completely ignored by your bidding.

And that’s not the only issue. Conversion data is often impure as well: many recorded conversions are non-incremental, meaning they would have happened without the ad, and bidding toward them can hurt business outcomes. If your ad conversions are mostly non-incremental, you’d be better off having no bid management at all.

So what is being done about it? There are a few solutions available on ad platforms, but I will argue that these solutions are faulty fixes.

Number one: Using attribution models to address the data sparsity issue. You may have implemented this using Google's Data-Driven Attribution (GA4 DDA), or perhaps by lengthening your attribution windows in Meta Ads. These strategies do reduce data sparsity, just not enough to make a real difference. Even if DDA increases your attributable data volume by 5x, at a 1% conversion rate you're still leaving 95% of clicks unused.
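To make that arithmetic concrete, here is a minimal sketch. The function name `unused_click_share` and its parameters are illustrative assumptions, not part of any ad platform's API; the 1% conversion rate and the 5x uplift are the example figures used above.

```python
def unused_click_share(conversion_rate: float, volume_multiplier: float = 1.0) -> float:
    """Return the share of clicks that carry no conversion signal for bidding.

    conversion_rate: fraction of clicks that convert (0.01 means 1%).
    volume_multiplier: hypothetical uplift in attributable data volume,
        e.g. 5.0 if an attribution model surfaces 5x more signal.
    """
    # The share of clicks with a usable signal can never exceed 100%.
    signal_share = min(conversion_rate * volume_multiplier, 1.0)
    return 1.0 - signal_share


baseline = unused_click_share(0.01)        # no attribution uplift
with_dda = unused_click_share(0.01, 5.0)   # assumed 5x more attributable data

print(f"Unused clicks at baseline: {baseline:.0%}")
print(f"Unused clicks with a 5x uplift: {with_dda:.0%}")
```

Under these assumptions, the unused share only moves from 99% to 95%, which is why a bigger attribution window alone does not solve the sparsity problem.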

Number two: Using your first-party business data to enhance those conversions. You may have done this with Google's Enhanced Conversions or Meta's Advanced Matching. These strategies can be a good way to identify and then remove non-incremental conversions. But removing conversions shrinks an already small dataset, so we're back to square one with data sparsity.

These solutions are not enough. What we need is a new paradigm. Instead of ad platforms training on a few "perfect" conversion signals, they should train on many "good enough" click value signals. This is where Bonsai Predictive Algorithms step in to bridge the gap.